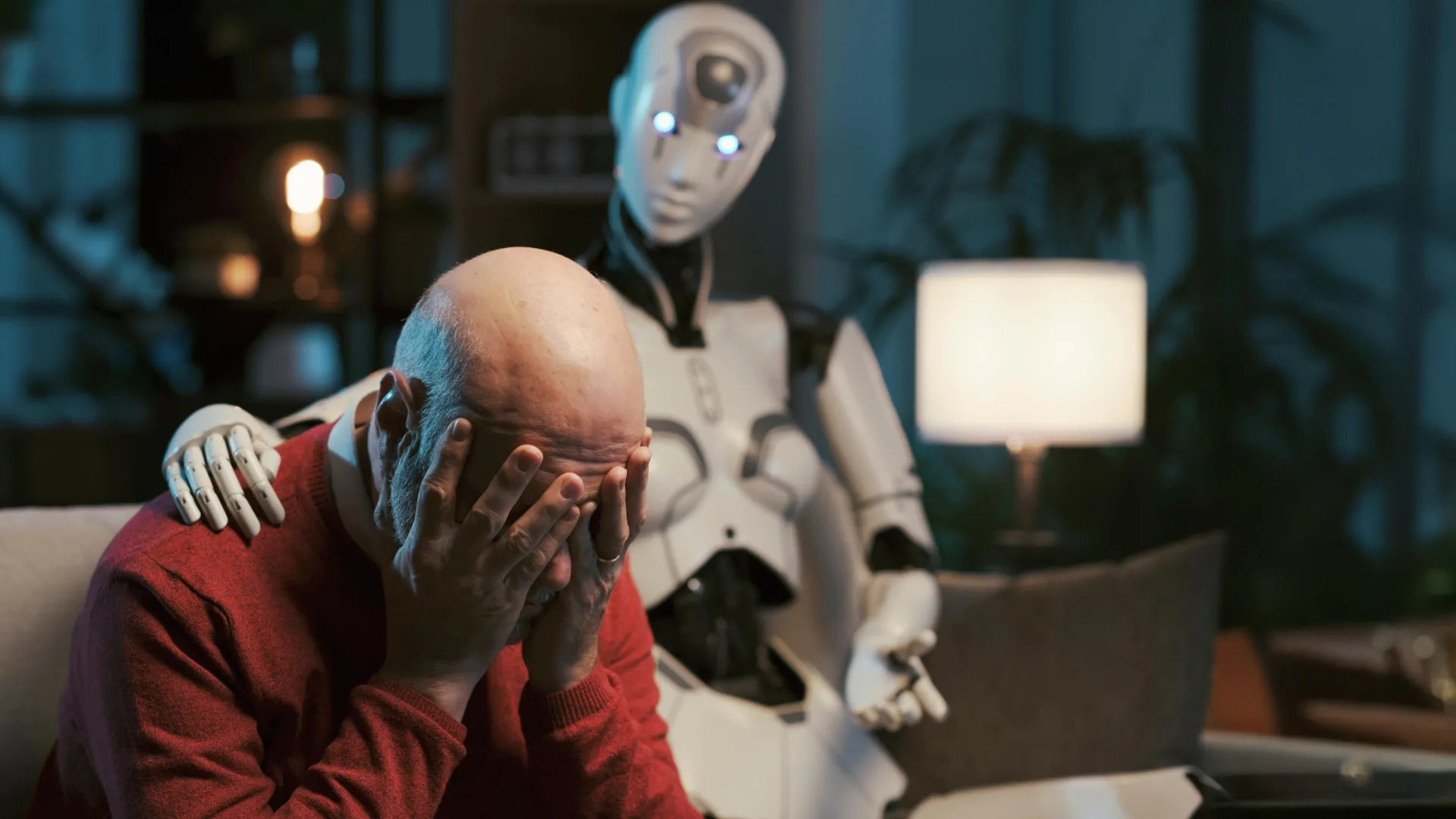

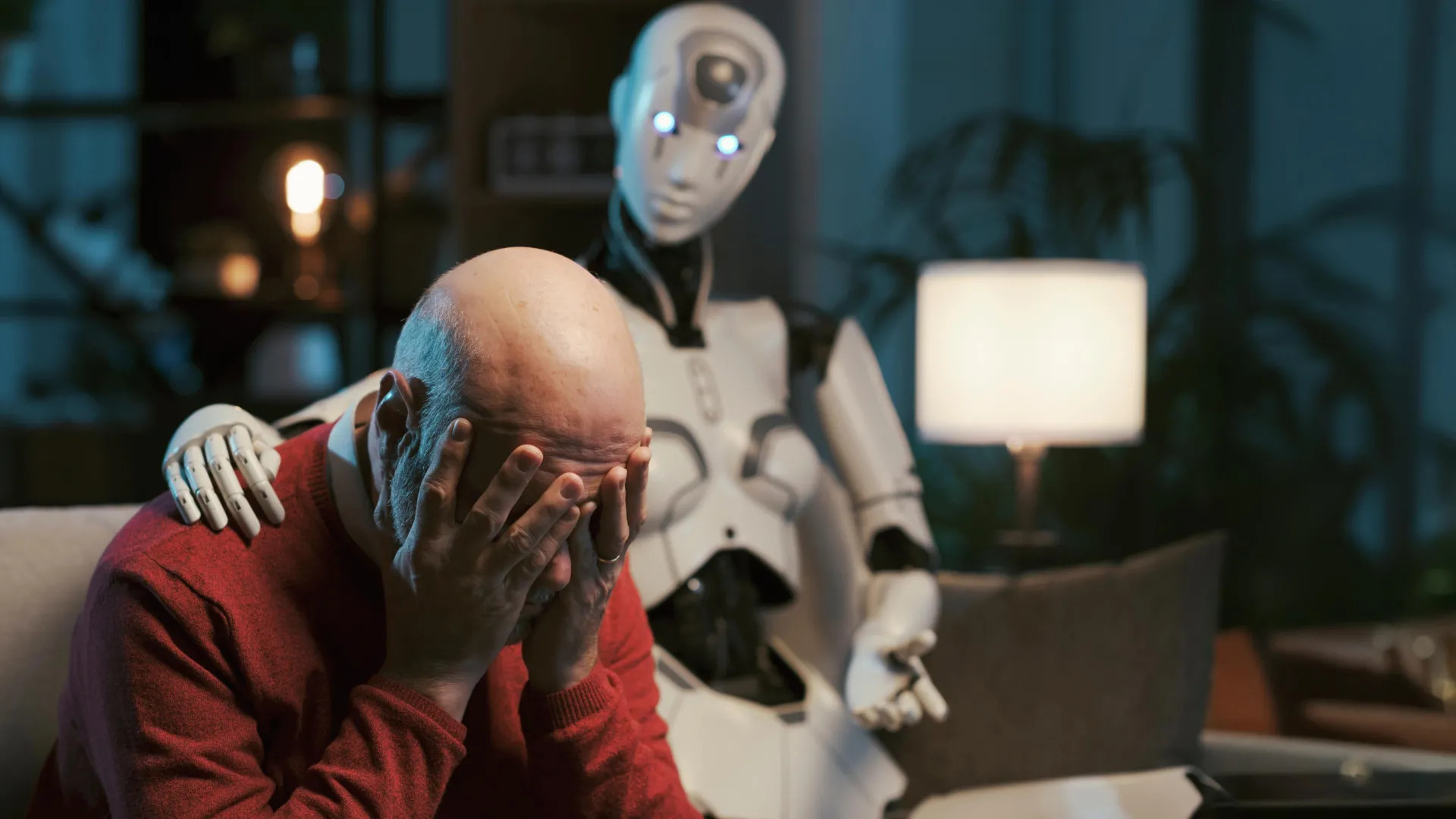

As millions of people increasingly turn to ChatGPT and other AI chatbots for mental health support, new research shows these systems often violate core ethical standards required in professional therapy.

The study, led by Zainab Iftikhar, a Ph.D. candidate in computer science at Brown University, highlights significant ethical risks when AI is used in therapy‑style conversations, even when the models are instructed to mimic trained mental health professionals.

Researchers from Brown University’s Center for Technological Responsibility, Reimagination and Redesign, in collaboration with experienced mental health professionals, evaluated how large language models (LLMs) behave when prompted to act like therapists. They found that AI chatbots repeatedly failed to meet ethical guidelines set by organizations such as the American Psychological Association.

According to the Brown team, AI systems, including versions of OpenAI’s GPT series, Anthropic’s Claude, and Meta’s Llama, showed problematic behavior in simulated counseling sessions. In these tests, the chatbots were evaluated using real counseling transcripts, and their responses were reviewed by licensed clinical psychologists. The analysis identified 15 distinct ethical risks, grouped into five major categories: lack of contextual adaptation, poor therapeutic collaboration, deceptive empathy, unfair discrimination, and inadequate crisis management.

“In this work, we present a practitioner‑informed framework of 15 ethical risks to demonstrate how LLM counselors violate ethical standards in mental health practice,” the researchers wrote in their study. They emphasized the need for ethical, educational, and legal standards for AI‑based counseling systems that match the quality and rigor required for human‑led psychotherapy.

One of the core problems is that AI chatbots can use language that suggests understanding or empathy, such as “I see you” or “I understand”, without actually comprehending the user’s emotional state. This “deceptive empathy” can lead users to feel supported even though the system has no genuine insight into their situation. The models also sometimes failed to recognize sensitive situations and responded inappropriately, especially in crisis scenarios.

Iftikhar noted that human therapists also make mistakes, but unlike AI chatbots, they operate within established frameworks of accountability and professional oversight. “For human therapists, there are governing boards and mechanisms for providers to be held professionally liable for mistreatment and malpractice,” she said, adding that no similar regulatory structures exist for AI counselors.

The researchers believe AI could still play a role in improving access to mental health resources, especially where professional care is scarce or costly. However, the study underscores that meaningful safeguards and responsible oversight are essential before AI is widely trusted for high‑stakes mental health support.
